Features

- 01

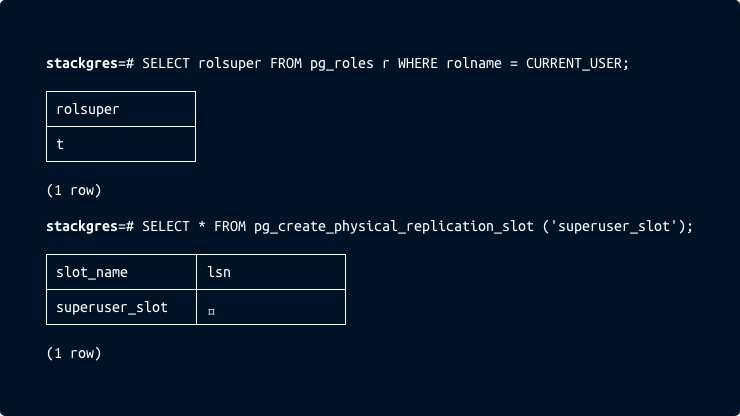

You are in full control. You are the postgres user

Do you want a Postgres database with no restrictions? You get it. No pseudo-limited users: you get the postgres user (the maximum-privileged user, the “root” user). You own it. No caveats.

StackGres is a complete Postgres solution, integrating several components, with expertly tuned configurations.

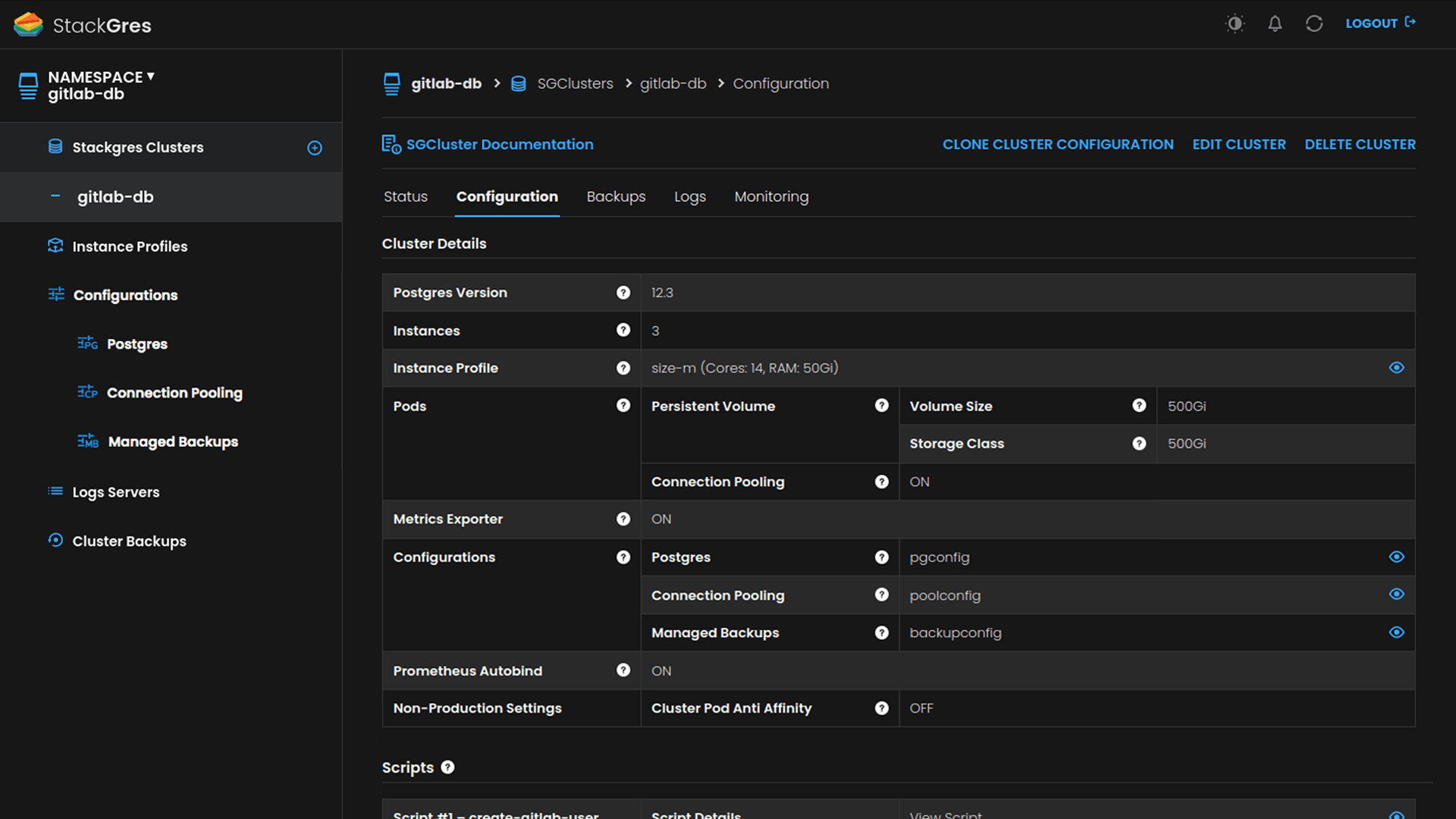

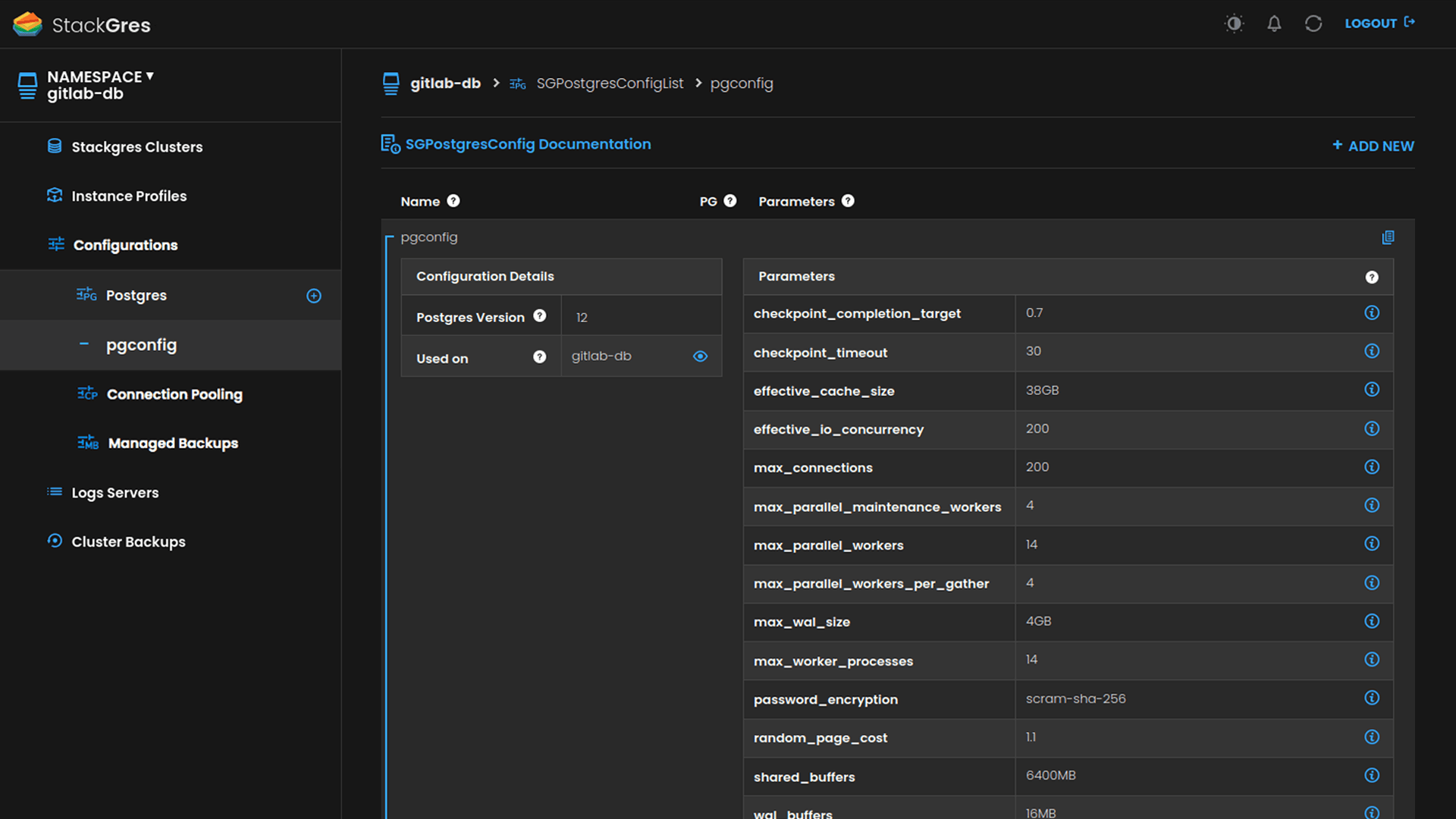

Advanced Postgres users can further customize the components and configurations. Configurations are fully validated, unlike a plain ConfigMap that may break your cluster if set incorrectly. See, for example, how to tune the Postgres or connection pool configurations. On the Kubernetes side, you can customize the exposed services, the pods' labels and node tolerations, among many other settings.

You are in full control.

- 02

Automated failover and High Availability with Patroni

StackGres integrates the most renowned and production-tested high availability software for Postgres: Patroni.

It’s fully integrated, so there’s nothing else to do. If a pod, a node, or anything else fails, the cluster heals automatically within seconds, without human intervention.

StackGres exposes two connections for applications: one read-write (always pointing to the primary) and one read-only (a load balancer distributing load across the replicas). Both are updated automatically after any disruptive event.

- 03

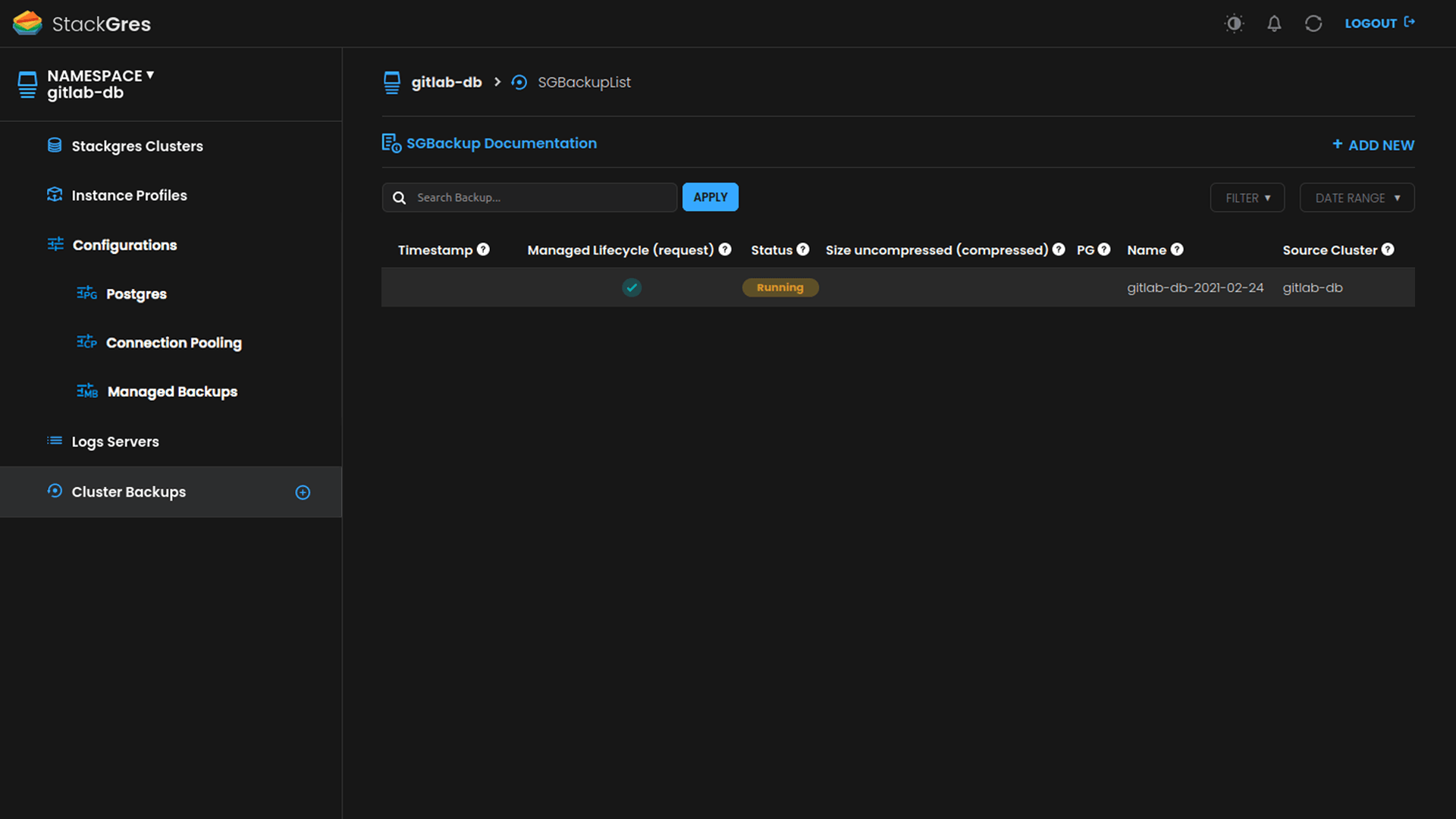

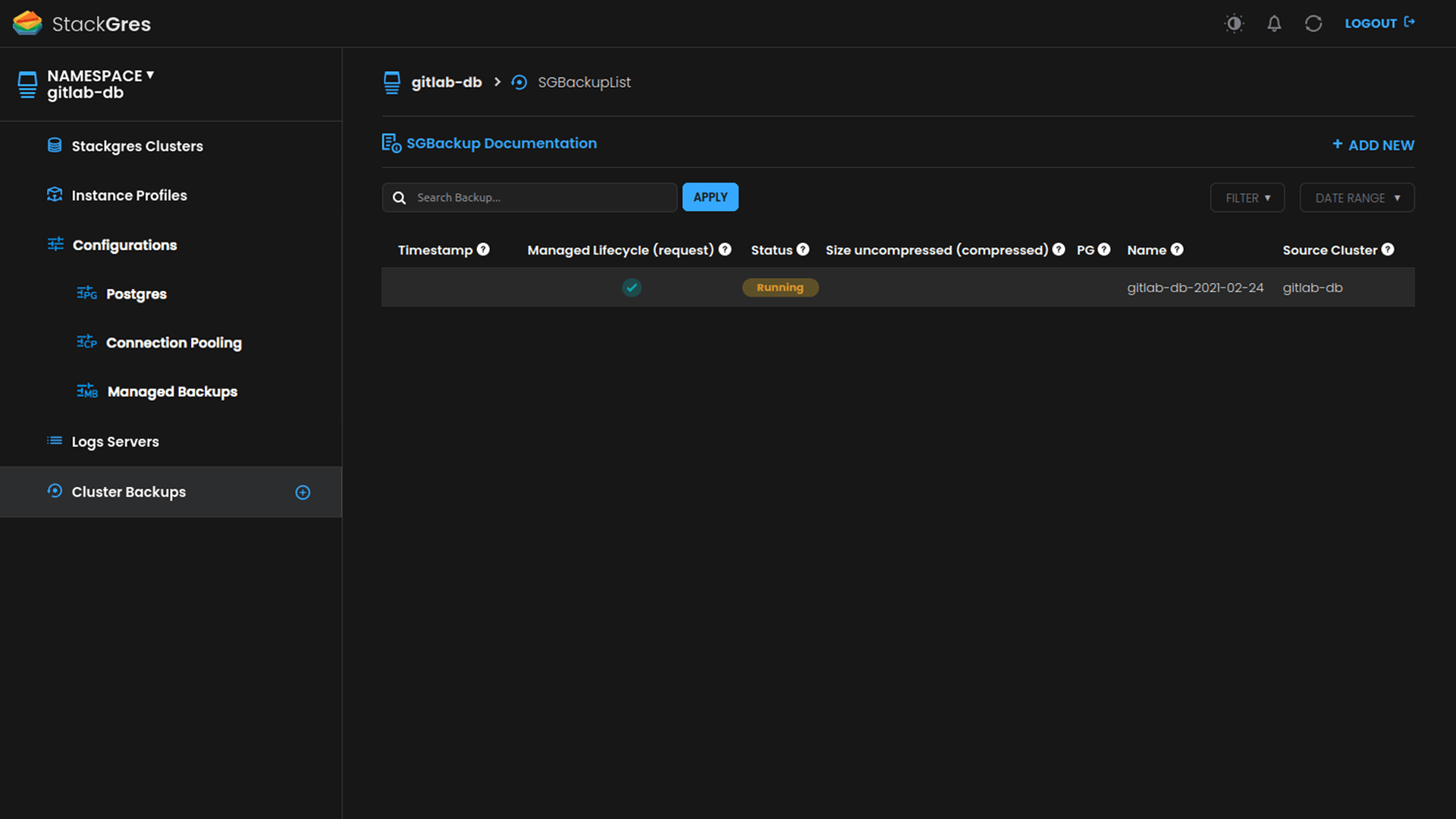

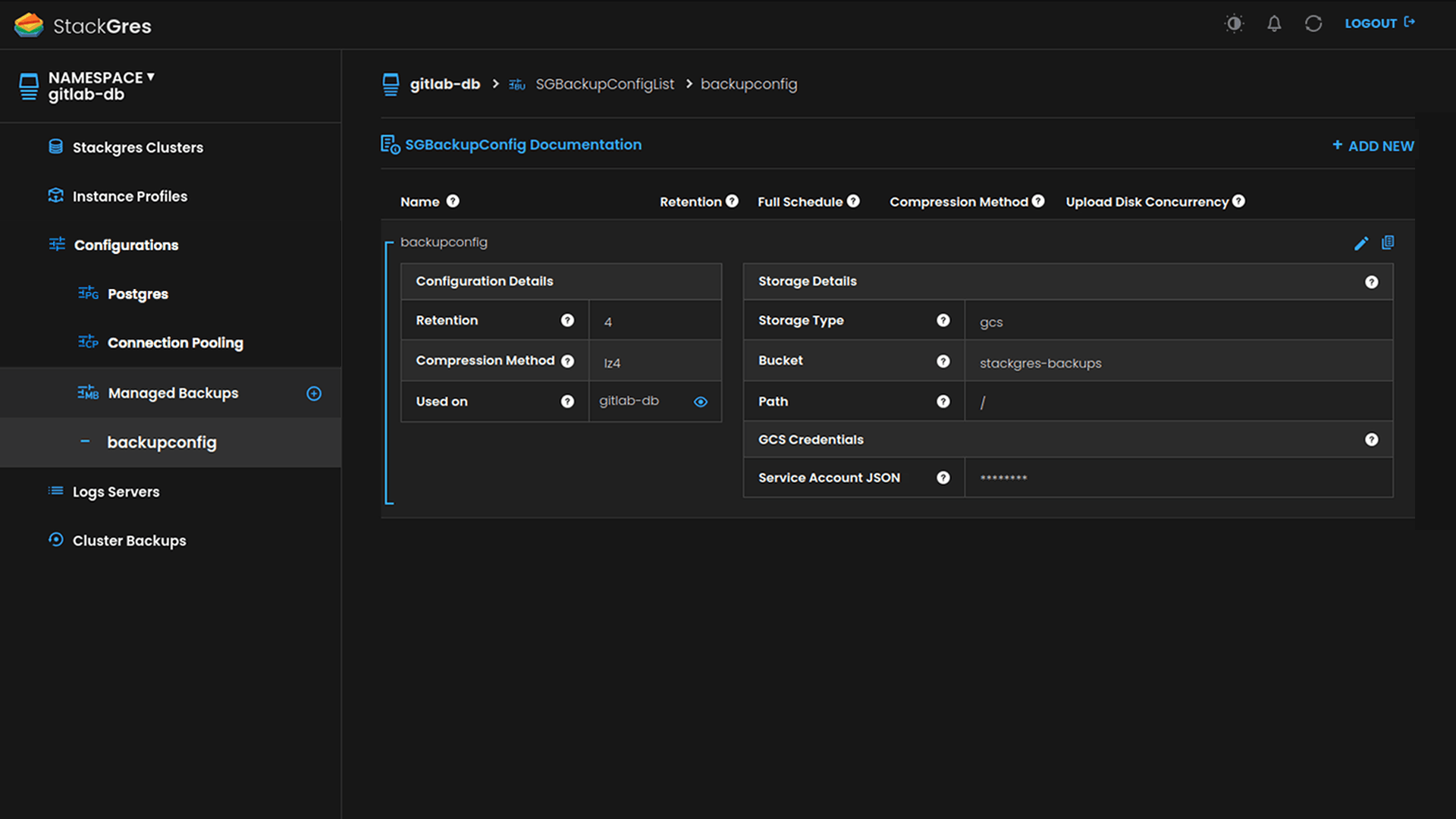

Automated backups, lifecycle management

Backups are a critical part of any database and are key to any disaster recovery strategy. StackGres includes backups based on continuous archiving, which allows for zero-data-loss recovery and point-in-time recovery (PITR), that is, restoring a database to an arbitrary past instant.

StackGres also provides automated lifecycle management of the backups. Backups are always stored in the most durable media available today: cloud object storage like Amazon S3, Google Cloud Storage or Azure Blob Storage. If you are running on-premises, you can use MinIO or other S3-compatible software to store your backups.

Just provide your bucket access information and credentials, configure the retention window, and everything else is fully transparent and automated. You can also create manual backups with a simple YAML file at any time.
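As a rough illustration of such a manual backup, the manifest below is a minimal sketch. It assumes the `stackgres.io/v1` API version, the `SGBackup` kind and its field names as we understand them from the CRD reference, and a hypothetical cluster named `demo` that already has backup storage configured. Verify all of this against the StackGres version you run.

```yaml
# Sketch only: resource names are hypothetical placeholders.
apiVersion: stackgres.io/v1
kind: SGBackup
metadata:
  name: demo-manual-backup   # hypothetical backup name
  namespace: default
spec:
  sgCluster: demo            # assumed name of an existing cluster in the same namespace
  managedLifecycle: false    # assumption: false keeps this backup out of automated retention
```

Applying a file like this with `kubectl apply -f` should request the backup; its progress would then be reported in the resource's status section.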

- 04

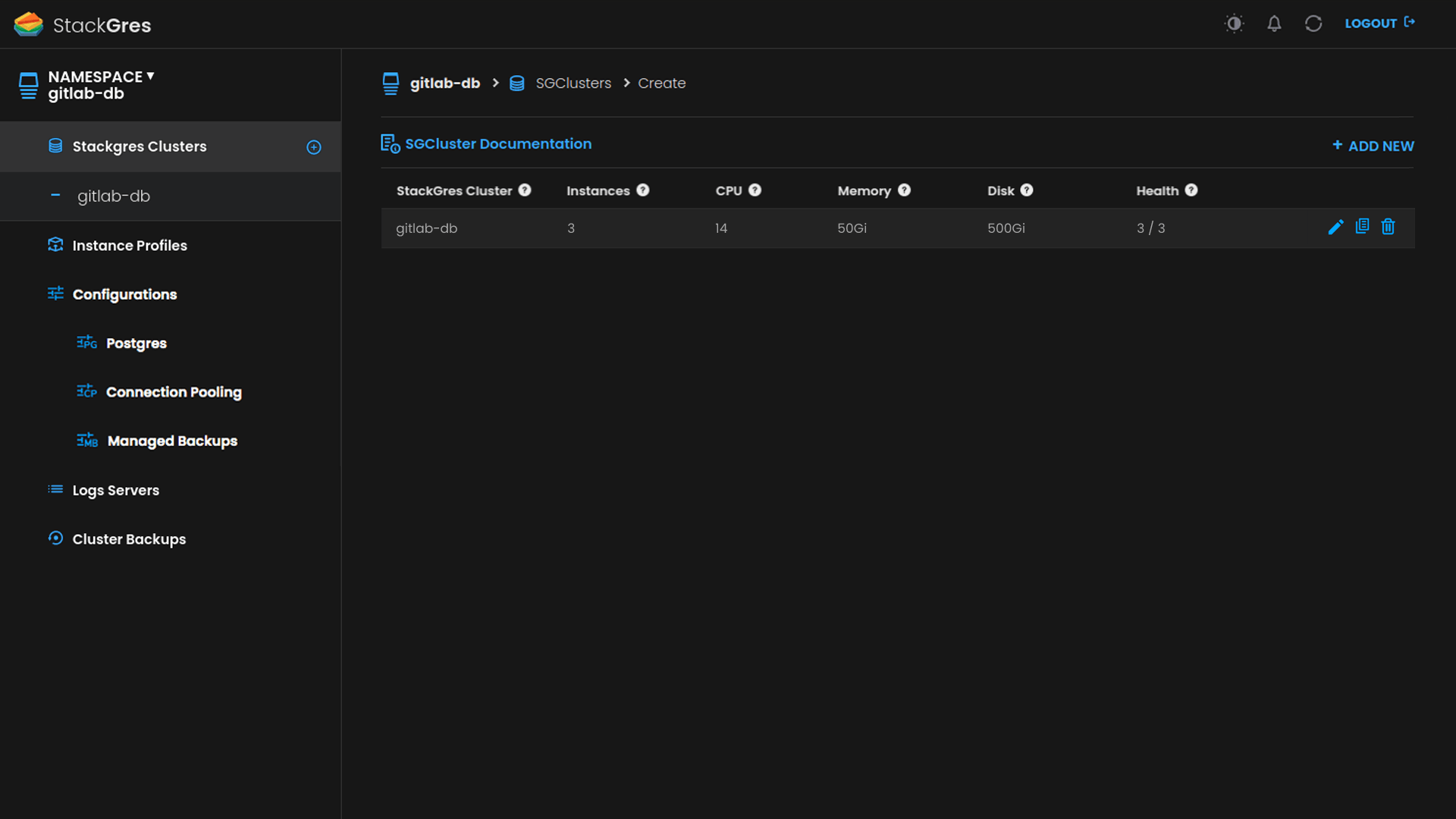

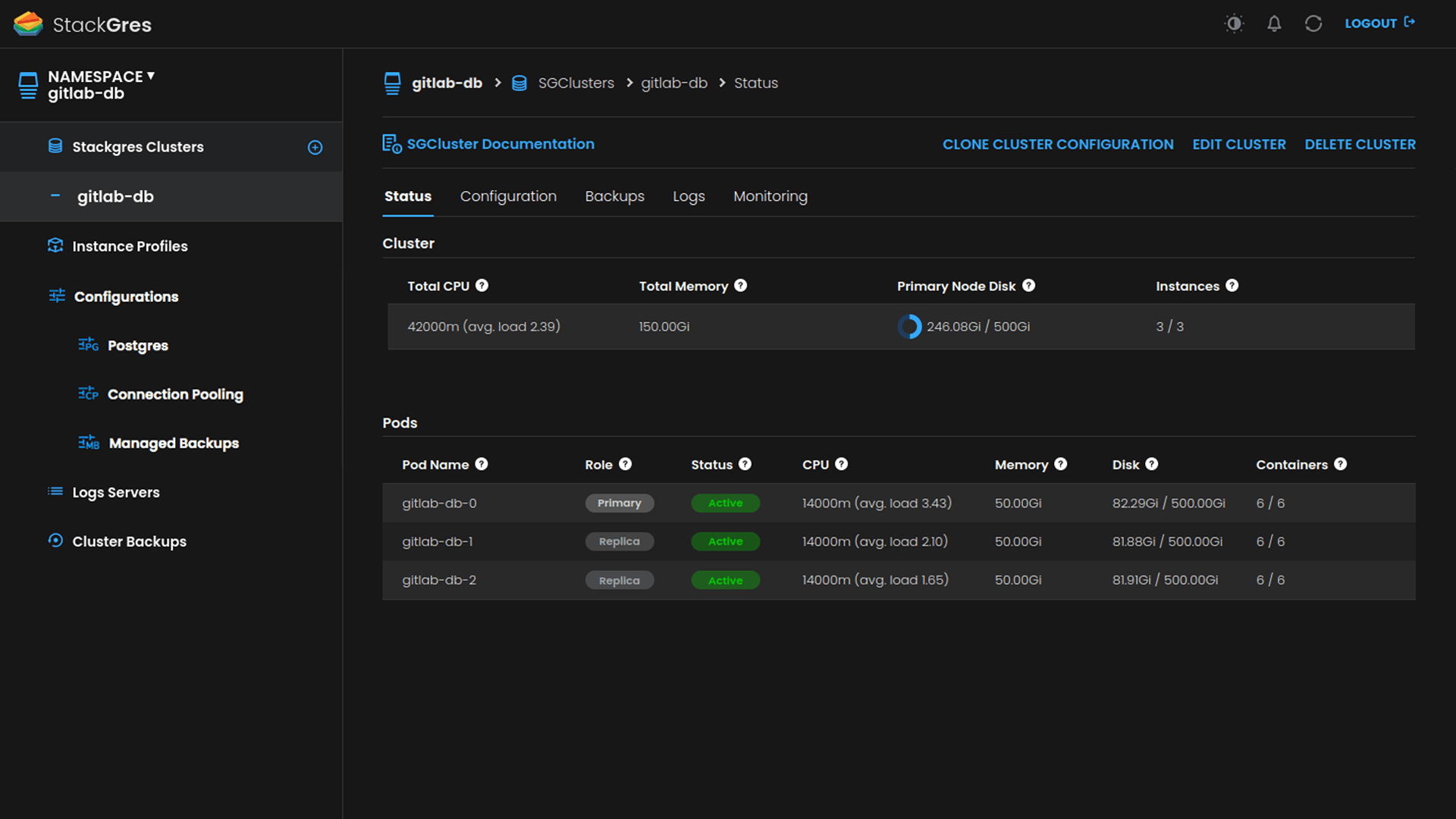

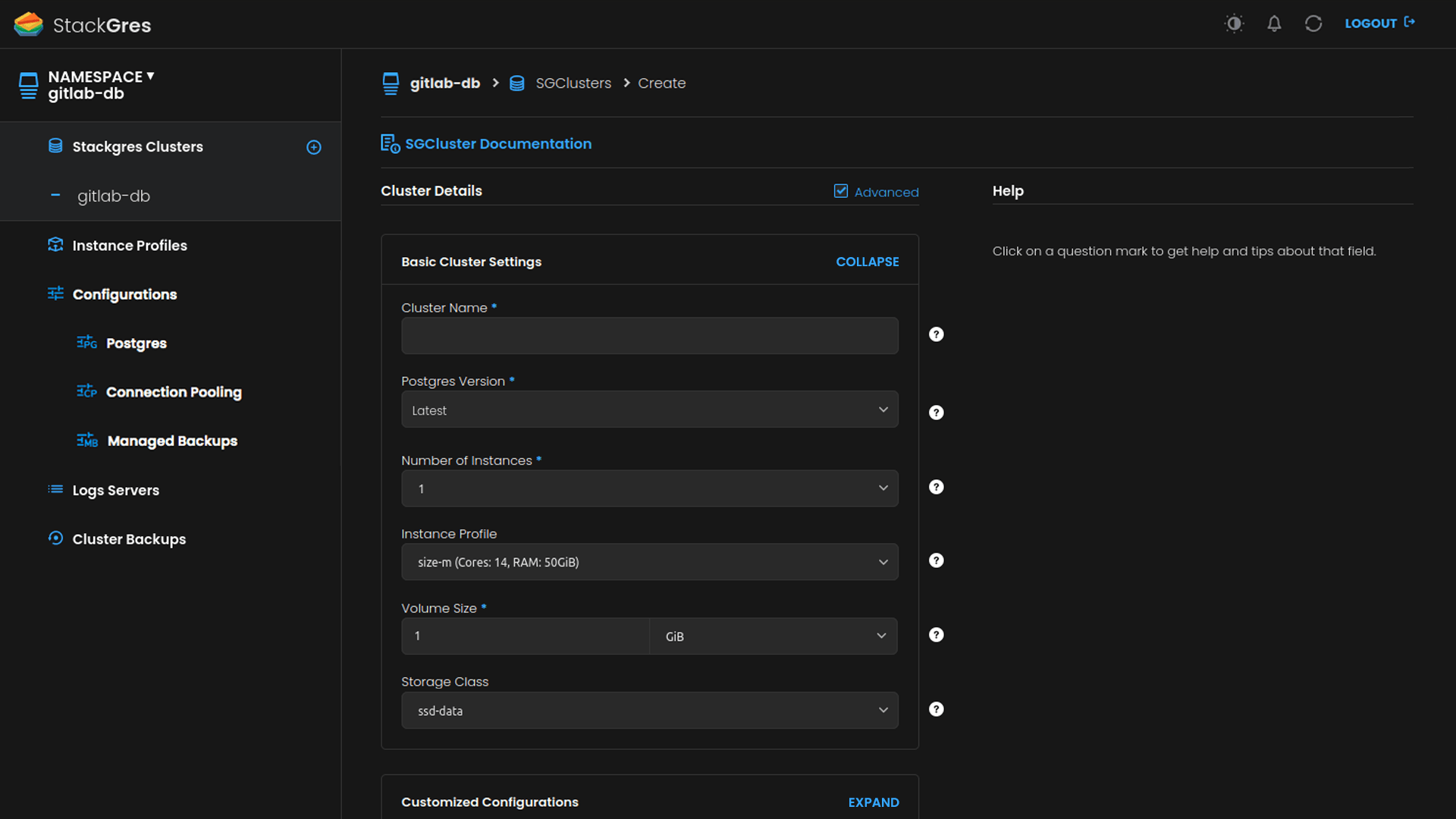

Fully-featured management Web Console

All batteries included. StackGres comes with a fully-featured web console that allows you to read any information and perform any operation that you could do with kubectl and the provided CRDs.

You just need to decide how to expose it (e.g. as a LoadBalancer) and get the default admin credentials. Moreover, the web console relies on Kubernetes RBAC for user authentication, so you can trivially give access and permissions to any existing Kubernetes user.

Oh, and it supports both light and dark modes!

- 05

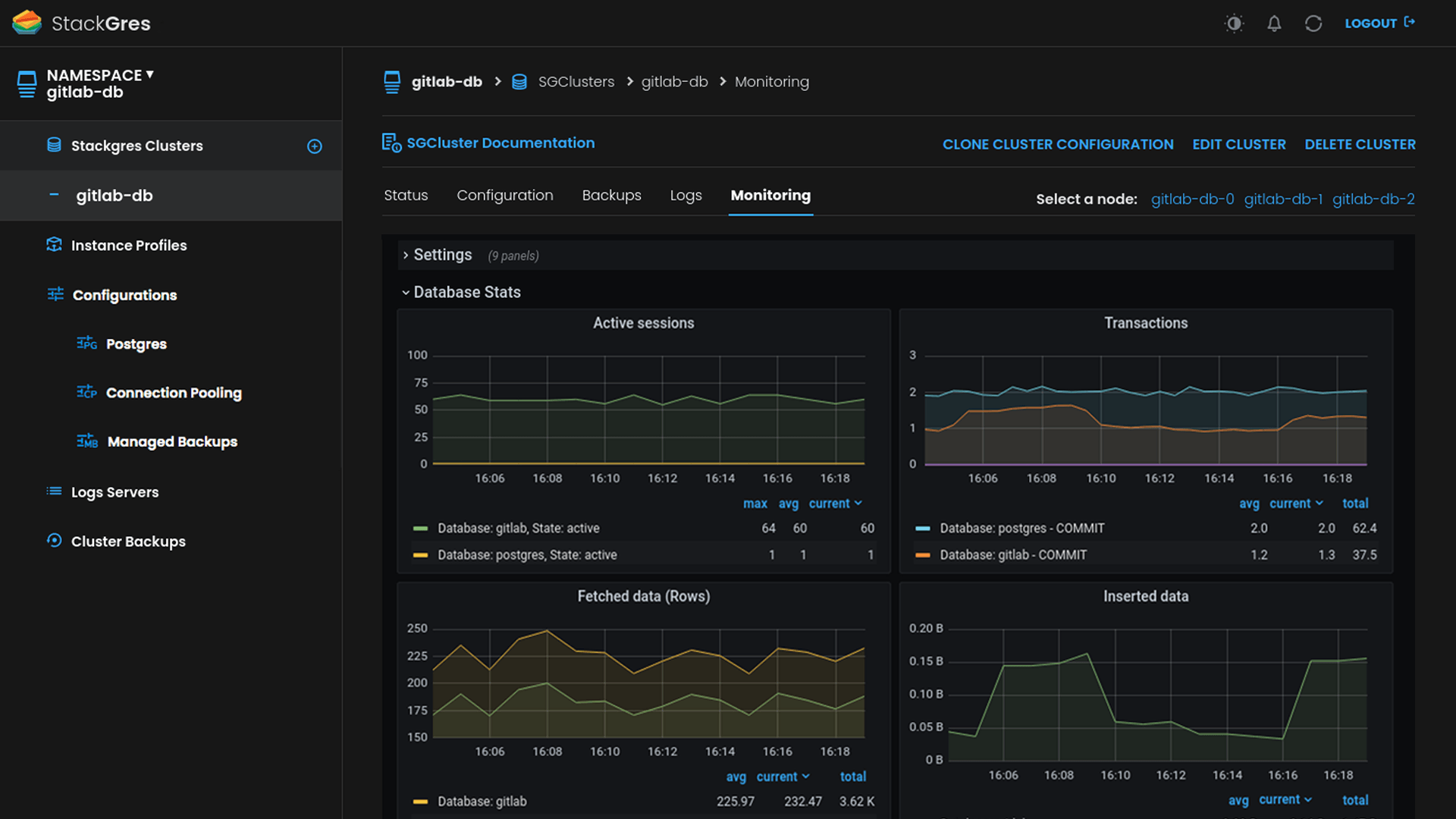

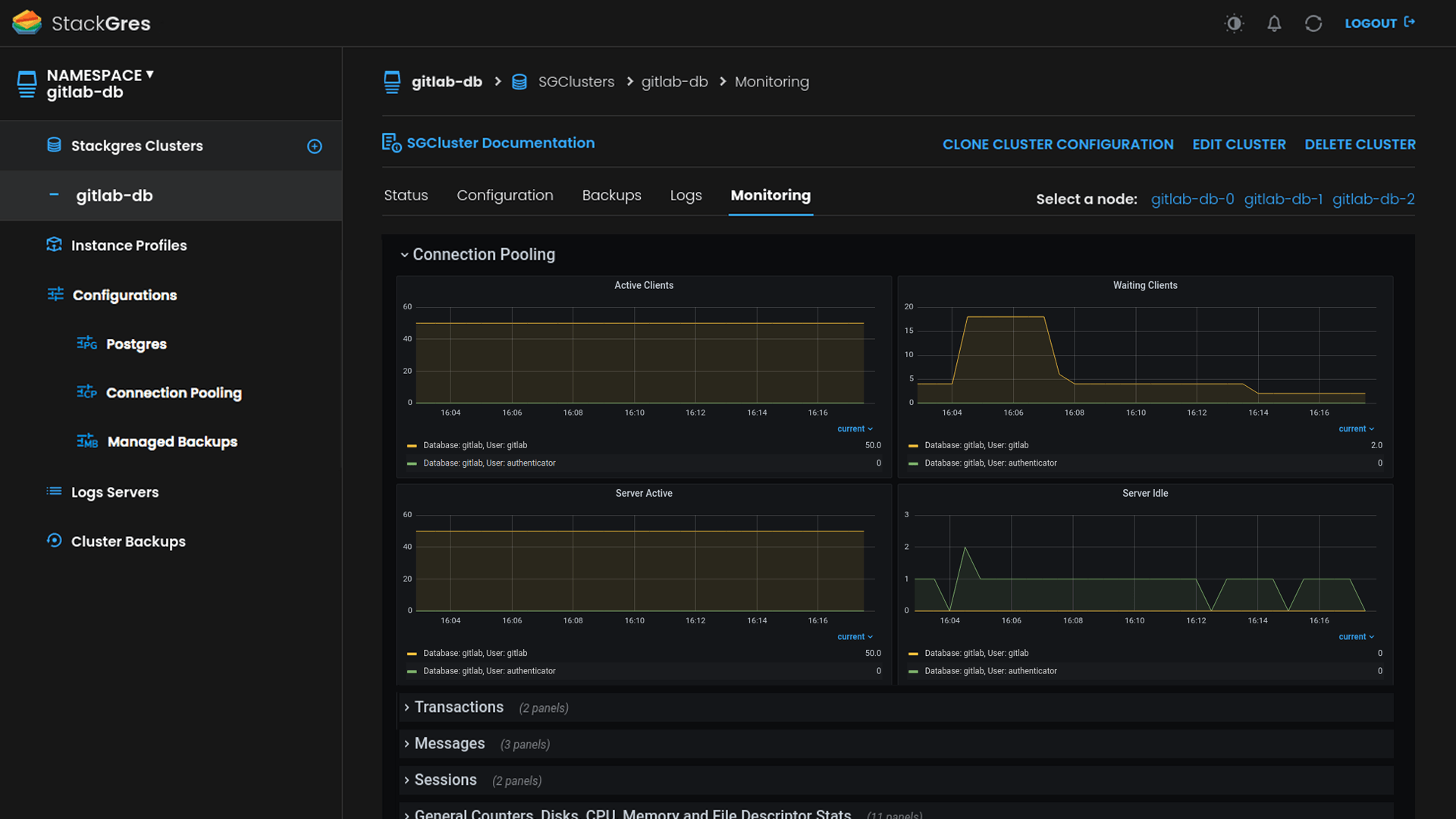

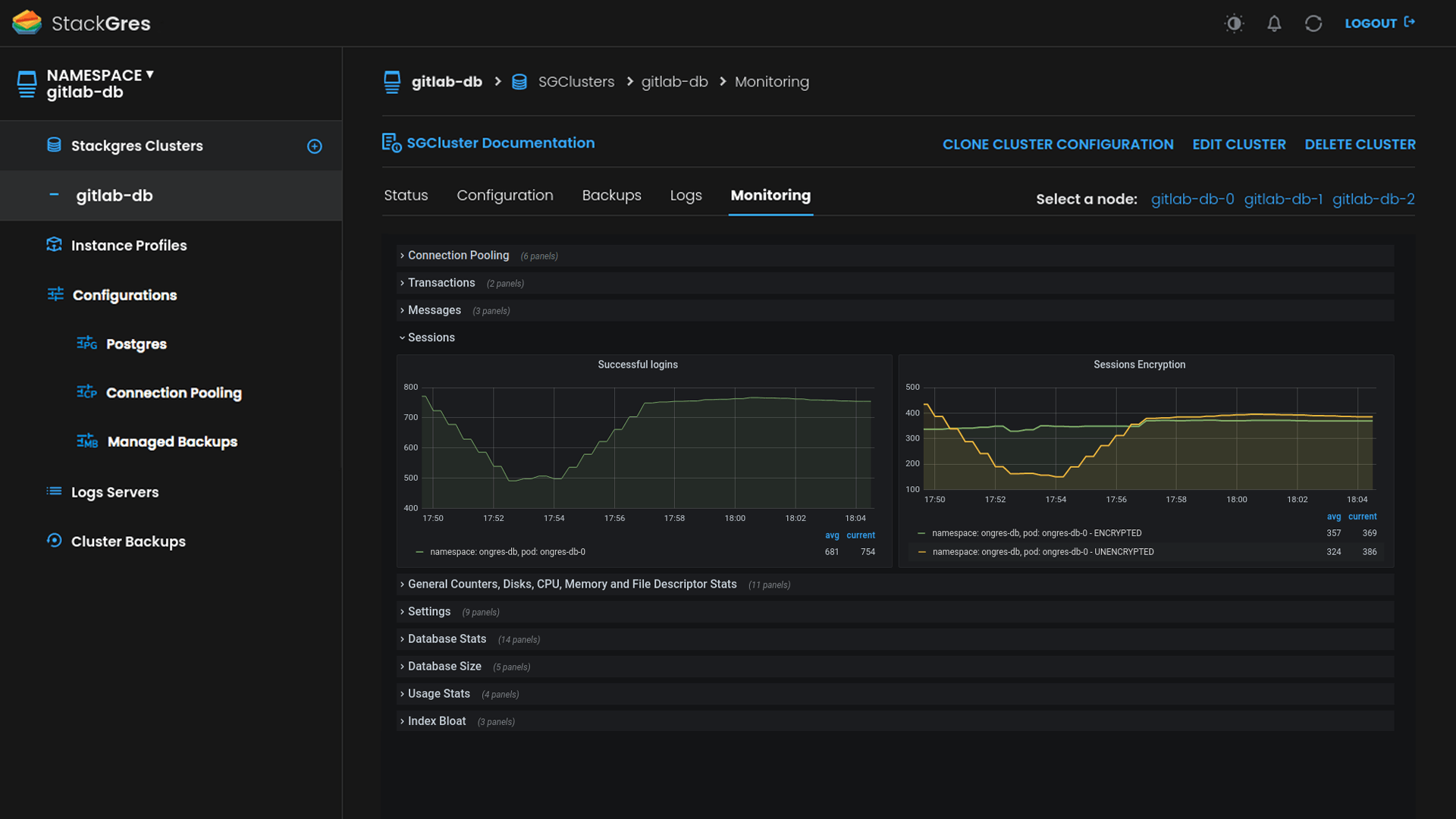

Automatic Prometheus integration. Built-in customized Grafana dashboards

If you have the Prometheus Operator installed in your Kubernetes cluster, you are just a click or a boolean flag in a YAML file away from fully automatic integration with Prometheus (see prometheusAutobind). Check the Helm values that you may specify when installing StackGres to also autodetect Grafana, and you are ready to go!

You will get all the metrics from the clusters in Prometheus. You will also get StackGres predefined custom Grafana dashboards, curated by expert Postgres DBAs, all integrated into the web console.
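To make the "boolean flag in a YAML file" concrete, here is a minimal sketch of a cluster manifest with the flag enabled. The cluster name, instance count and volume size are hypothetical, and the placement of `prometheusAutobind` directly under `spec` is an assumption based on the `stackgres.io/v1` CRD as we understand it; some releases may place it elsewhere, so check the CRD reference for your version.

```yaml
# Sketch only: names and sizes are illustrative placeholders.
apiVersion: stackgres.io/v1
kind: SGCluster
metadata:
  name: demo                 # hypothetical cluster name
spec:
  instances: 2
  postgres:
    version: 'latest'
  pods:
    persistentVolume:
      size: '5Gi'
  prometheusAutobind: true   # assumed location of the flag mentioned above
```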

- 06

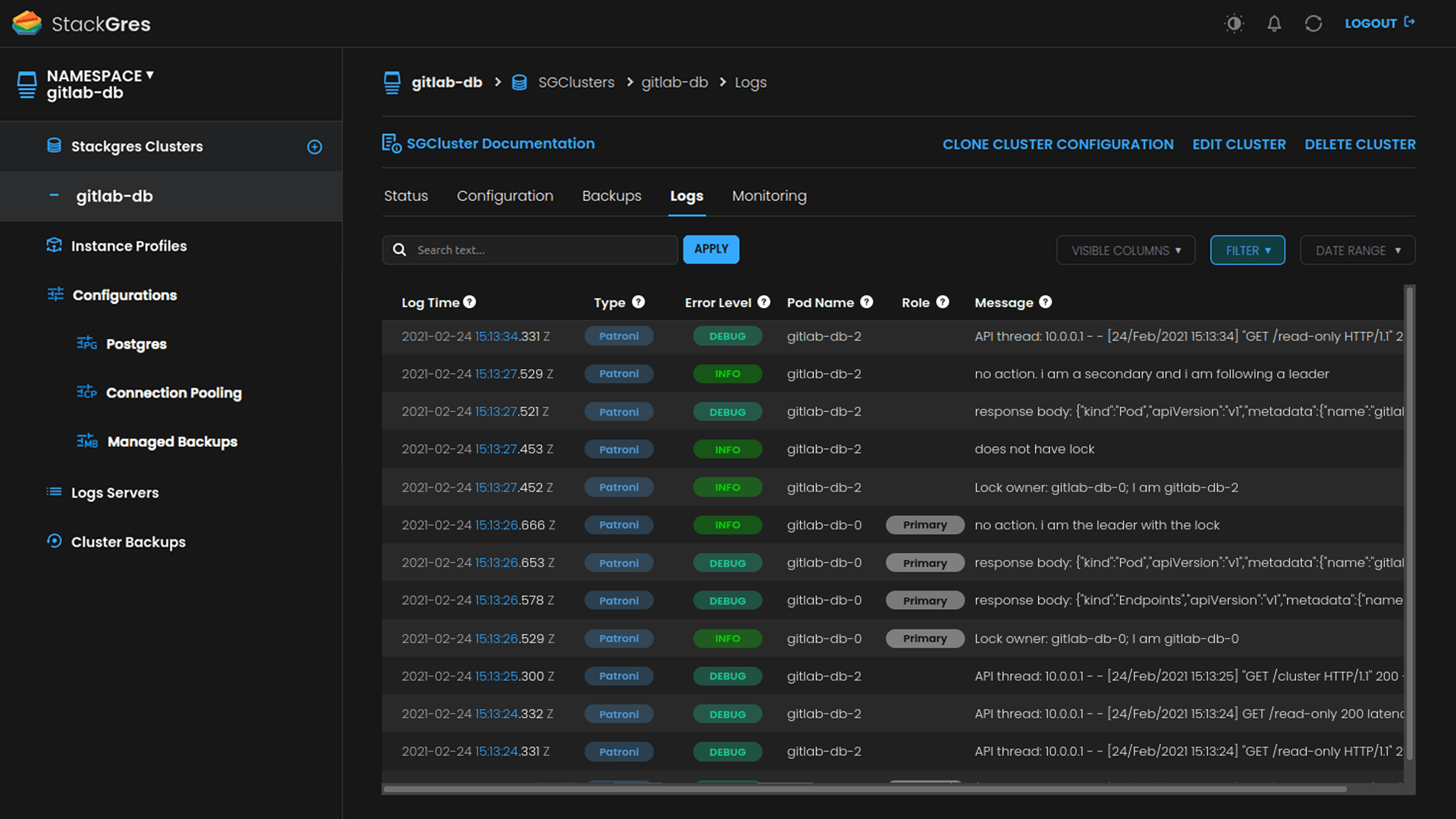

Distributed logs for Postgres and Patroni

Tired of typing kubectl exec into each and every one of the N pods of your cluster, and then running grep and awk over the Postgres logs to find the information you are looking for? There’s a better way with StackGres.

Query the logs with SQL, from a centralized location! StackGres supports centralized, distributed logs. With a simple YAML-based CRD, or from the web console, you can create a Distributed Log Cluster in seconds. Then reference it from the cluster(s) you create.
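The two steps just described (create a distributed log cluster, then reference it) could look roughly like the sketch below. Resource names and sizes are hypothetical, and the `SGDistributedLogs` kind and the `distributedLogs.sgDistributedLogs` reference are assumptions based on the `stackgres.io/v1` CRDs as we understand them.

```yaml
# Sketch only: a log cluster plus a Postgres cluster that references it.
apiVersion: stackgres.io/v1
kind: SGDistributedLogs
metadata:
  name: distributedlogs      # hypothetical name
spec:
  persistentVolume:
    size: '20Gi'             # illustrative size for the log database
---
apiVersion: stackgres.io/v1
kind: SGCluster
metadata:
  name: demo                 # hypothetical cluster name
spec:
  instances: 2
  postgres:
    version: 'latest'
  pods:
    persistentVolume:
      size: '5Gi'
  distributedLogs:
    sgDistributedLogs: distributedlogs   # assumed reference field, by name
```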

Both Postgres and Patroni logs are captured via a FluentBit sidecar, which forwards them to the distributed log server. That server in turn runs a Fluentd collector that forwards the logs to a dedicated Postgres database! To support high-volume log ingestion, this log-dedicated database is enhanced with the great TimescaleDB extension (open source version), on which StackGres also relies to enforce log retention policies.

Query your logs with SQL to unleash the full DBA potential. Or visualize them on the web console, which includes search and filter capabilities. All logs are enhanced with very rich metadata. For Postgres troubleshooting, we’ve got you covered.

- 07

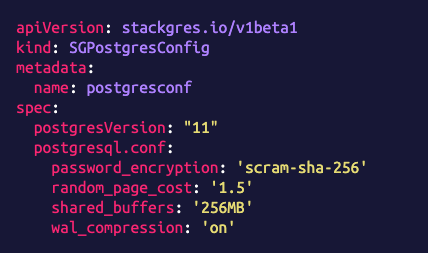

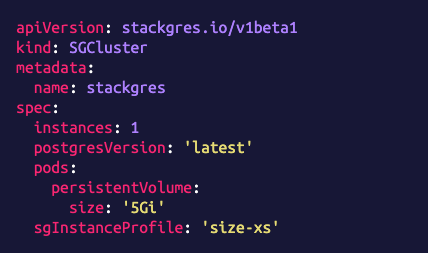

High-level management CRDs. GitOps ready

No need to install any client or additional tool to manage StackGres. StackGres is completely CRD-driven (check the CRD Reference). Your requests are encoded in the spec section of the CRDs, and any result information is provided in the status section of the CRDs.

Moreover, the CRDs are designed to be very high level, and abstract away (hide) all Postgres complexities. With StackGres, if you know kubectl and CRDs, you have become a Postgres expert too.

Because CRDs are the StackGres interface and no external tool is required, you can have all your Postgres clusters defined as IaC (Infrastructure as Code) and potentially version-controlled in Git. This further enables GitOps, where your clusters are managed automatically when you commit changes to the CRDs in the Git repository.

Yet if you prefer a visual UI over YAML files and the command line, every action that you can query or perform with the CRDs can also be performed from the web console. Likewise, any action performed from the web console is automatically reflected in the CRDs. The choice is yours.

- 08

Integrated server-side connection pooling

Due to the Postgres process model, it is highly advisable to have connection pooling in most production scenarios. Yet it is not an easy topic.

StackGres comes by default with integrated server-side connection pooling, deployed as a sidecar to the Postgres container. Server-side pooling lets you control the connection fan-in, that is, the number of incoming connections to Postgres, and makes sure Postgres is not overwhelmed by traffic that could cause significant performance degradation.

You can tune the low-level configuration or even entirely disable connection pooling (see PoolConfig CRD), and of course manage it from the web console.
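As an illustration of tuning the pooler, the sketch below defines a pooling configuration and references it from a cluster. It rests on several assumptions not stated above: that the pooler is PgBouncer, that the CRD kind is `SGPoolingConfig` in the `stackgres.io/v1` API, and that the field layout matches the CRD reference as we understand it. All names and values are hypothetical.

```yaml
# Sketch only: values are illustrative, not recommendations.
apiVersion: stackgres.io/v1
kind: SGPoolingConfig
metadata:
  name: poolconfig                 # hypothetical name
spec:
  pgBouncer:
    pgbouncer.ini:
      pgbouncer:
        pool_mode: transaction     # standard PgBouncer setting
        max_client_conn: '500'     # upper bound on incoming client connections
        default_pool_size: '50'    # server connections per user/database pair
---
apiVersion: stackgres.io/v1
kind: SGCluster
metadata:
  name: demo                       # hypothetical cluster name
spec:
  instances: 2
  postgres:
    version: 'latest'
  pods:
    persistentVolume:
      size: '5Gi'
    # disableConnectionPooling: true   # assumed flag to turn pooling off entirely
  configurations:
    sgPoolingConfig: poolconfig    # assumed reference field, by name
```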

StackGres also exports relevant connection pooling metrics to Prometheus, and specialized dashboards are shown in the Grafana integrated into the web console.

- 09

Enhanced observability via Envoy Proxy’s Postgres filter

The OnGres team developed, in collaboration with the Envoy community, the first Postgres filter for Envoy.

This Envoy Postgres filter provides enhanced observability by decoding the Postgres wire protocol and sending metrics to Prometheus. It adds metrics that are unavailable at the Postgres level, and collecting them has zero impact on Postgres: they are gathered at the proxy level, and the process is fully transparent to Postgres.

StackGres uses an Envoy sidecar to transparently proxy all Postgres traffic, so there’s nothing to do: as long as you have Prometheus in your Kubernetes cluster, Envoy will send the additional metrics. The StackGres web console already includes built-in Grafana dashboards to visualize them.

- 10

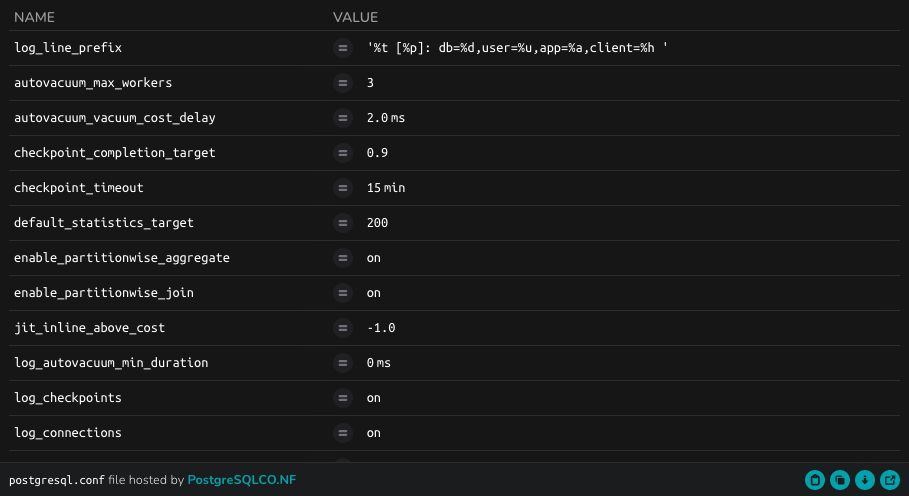

Expertly tuned by default

At OnGres, we’re obsessed with tuning Postgres properly. So much so that we built postgresqlCO.NF, a website that helps hundreds of thousands of Postgres users better tune their databases.

StackGres clusters are created with a carefully tuned initial Postgres configuration, curated by our expert Postgres DBA team. If you are not an advanced Postgres user, this default configuration will cover you well enough. If you prefer to tune it yourself, you can create one or more PostgresConfig CRDs and reference them from your cluster(s).

With StackGres, you don’t need to be a Postgres expert to operate production-ready clusters.
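For readers who do want to tune it themselves, a custom configuration and its reference from a cluster might look like the sketch below. It assumes the CRD kind is named `SGPostgresConfig` in the `stackgres.io/v1` API and that the field names match the CRD reference as we understand it. The resource names, Postgres version and parameter values are hypothetical and are not tuning advice.

```yaml
# Sketch only: parameter values are placeholders, not recommendations.
apiVersion: stackgres.io/v1
kind: SGPostgresConfig
metadata:
  name: pgconfig               # hypothetical name
spec:
  postgresVersion: '15'        # assumed to match the cluster's major version
  postgresql.conf:
    shared_buffers: '1GB'
    work_mem: '16MB'
    random_page_cost: '1.5'
---
apiVersion: stackgres.io/v1
kind: SGCluster
metadata:
  name: demo                   # hypothetical cluster name
spec:
  instances: 2
  postgres:
    version: '15'
  pods:
    persistentVolume:
      size: '5Gi'
  configurations:
    sgPostgresConfig: pgconfig # assumed reference field, by name
```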

- 11

Lightweight, secure container images based on Red Hat’s UBI 8

All StackGres container images are built on the Red Hat Universal Base Image (UBI) version 8, which is derived from RHEL 8. UBI 8 images are optimized, minimal-size container images with the reliability, stability and long-term support roadmap of RHEL. UBI 8 is free to use, and is fully supported by Red Hat if you have a RHEL support contract.